Investigating IRL during Rapid Aiming Movements in XR and HRI

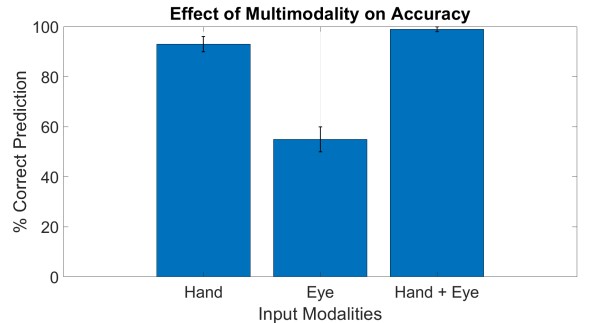

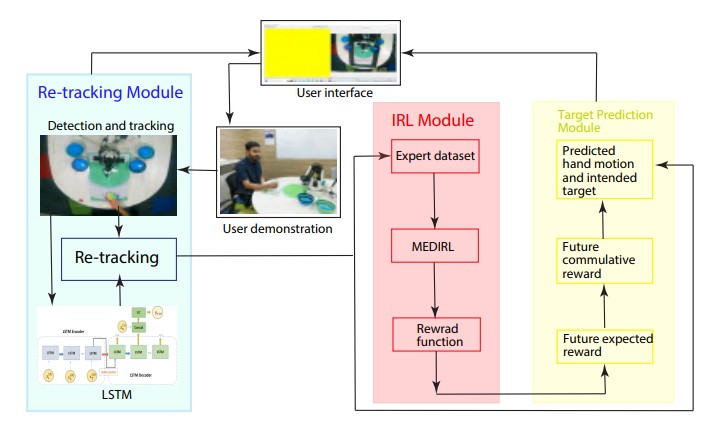

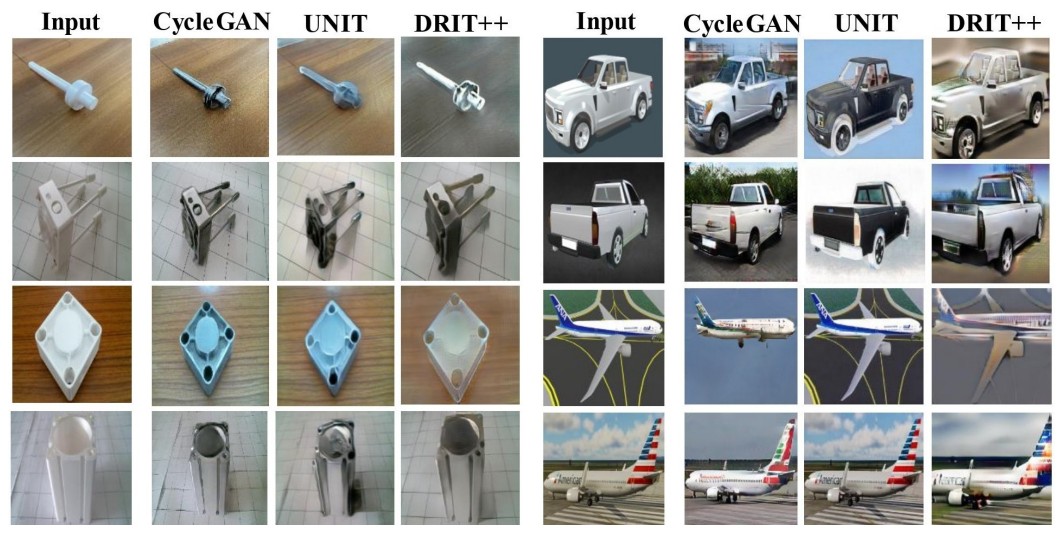

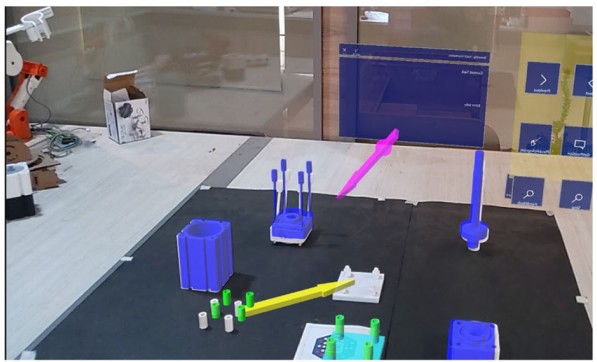

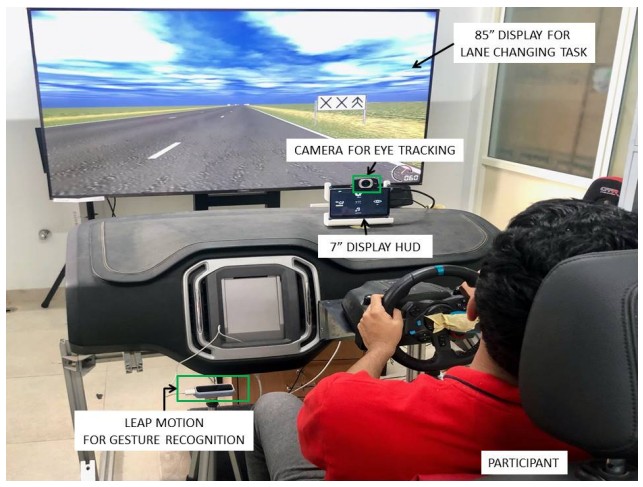

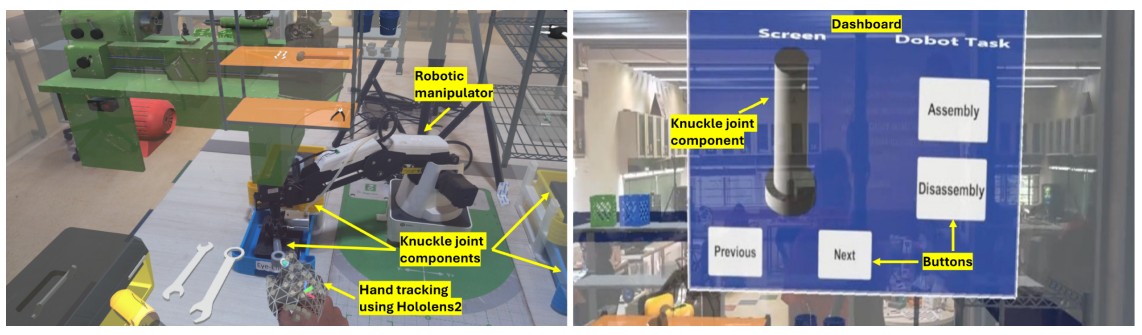

This project explores how Inverse Reinforcement Learning (IRL) can improve a system's understanding of human intent during rapid aiming movements, in both extended reality (XR) and collaborative human-robot interaction (HRI) settings.