Research Objective

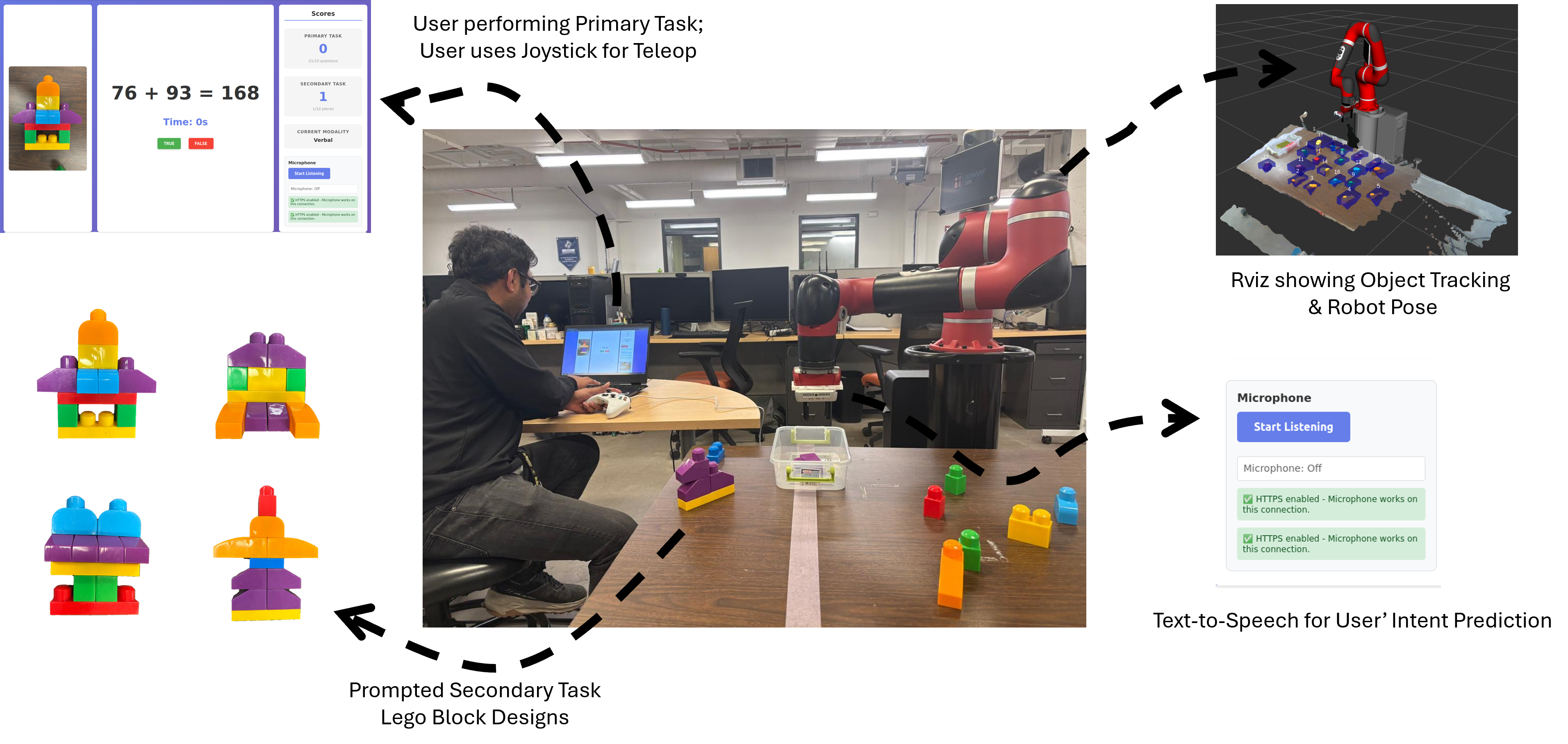

In high-stakes environments, users often need to operate robotic systems while managing a secondary cognitive load. This study explores how three interaction modalities (speech, eye gaze, and hand gestures) affect a user's mental workload, quantified with the NASA Task Load Index (NASA-TLX), and their performance on the primary task.

Study Methodology

Experimental Design

- Single vs. Dual Task: Participants performed a robotic manipulation task both on its own (single task) and alongside secondary cognitive distractors (dual task).

- NASA Task Load Index (TLX): Used to measure subjective workload across six dimensions: mental demand, physical demand, temporal demand, performance, effort, and frustration level.

- Modalities Tested: Speech, eye gaze, and hand gestures, with attention to the trade-off between high-precision gaze tracking and high-flexibility speech commands.
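As an illustration of how NASA-TLX ratings are commonly turned into a single workload score, here is a minimal Python sketch. It assumes each of the six subscales is rated on a 0 to 100 scale and shows both the unweighted "Raw TLX" mean and the classic weighted variant, where weights come from 15 pairwise comparisons. The study summary does not say which variant was used, and the function names and sample ratings below are hypothetical.

```python
# Illustrative NASA-TLX scoring sketch; names and sample values are assumptions,
# not taken from the study.

SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def _check_ratings(ratings):
    """Ensure all six subscales are present and within the assumed 0-100 range."""
    for name in SUBSCALES:
        if name not in ratings:
            raise ValueError(f"missing subscale: {name}")
        if not 0 <= ratings[name] <= 100:
            raise ValueError(f"rating out of range for {name}: {ratings[name]}")


def raw_tlx(ratings):
    """Raw TLX: the unweighted mean of the six subscale ratings."""
    _check_ratings(ratings)
    return sum(ratings[name] for name in SUBSCALES) / len(SUBSCALES)


def weighted_tlx(ratings, weights):
    """Weighted TLX: each weight counts how often a subscale was chosen
    across the 15 pairwise comparisons, so the weights must sum to 15."""
    _check_ratings(ratings)
    if sum(weights[name] for name in SUBSCALES) != 15:
        raise ValueError("pairwise-comparison weights must sum to 15")
    return sum(ratings[name] * weights[name] for name in SUBSCALES) / 15


# Hypothetical ratings for one participant in one condition.
example_ratings = {
    "mental_demand": 70,
    "physical_demand": 20,
    "temporal_demand": 60,
    "performance": 40,
    "effort": 65,
    "frustration": 45,
}
# Hypothetical pairwise-comparison tallies.
example_weights = {
    "mental_demand": 5,
    "physical_demand": 0,
    "temporal_demand": 3,
    "performance": 2,
    "effort": 4,
    "frustration": 1,
}

print(raw_tlx(example_ratings))                        # 50.0
print(weighted_tlx(example_ratings, example_weights))  # 61.0
```

Comparing per-subscale ratings (for example temporal demand and frustration) across conditions, rather than only the overall score, matches the way the findings are reported here.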

Key Findings

Our results indicated that speech-based interaction consistently lowered perceived temporal demand and frustration in dual-task scenarios compared with visually intensive gaze modalities. However, gaze-based selection remained superior for high-precision, low-latency target acquisition in single-task environments. This suggests that "Accessible HRI" (human-robot interaction) must be context-adaptive, shifting modalities based on the user's current cognitive state.
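One way to picture a context-adaptive policy is a simple rule-based selector, sketched below in Python. This is only an illustration of the idea, not the system used in the study: the `Context` fields, the `choose_modality` function, and the fallback for cases the findings do not cover are all hypothetical.

```python
# Hypothetical rule-based modality selector; all names are illustrative assumptions.
from dataclasses import dataclass
from enum import Enum


class Modality(Enum):
    SPEECH = "speech"
    GAZE = "gaze"


@dataclass(frozen=True)
class Context:
    dual_task: bool                # user is handling a secondary cognitive task
    needs_precise_targeting: bool  # current action requires precise target selection


def choose_modality(ctx: Context) -> Modality:
    """Map the reported findings onto a simple decision rule."""
    if ctx.dual_task:
        # Speech lowered perceived temporal demand and frustration under dual-task load.
        return Modality.SPEECH
    if ctx.needs_precise_targeting:
        # Gaze was superior for high-precision, low-latency selection in single-task use.
        return Modality.GAZE
    # Not covered by the reported findings; defaulting to speech is an arbitrary assumption.
    return Modality.SPEECH


print(choose_modality(Context(dual_task=True, needs_precise_targeting=True)).value)
print(choose_modality(Context(dual_task=False, needs_precise_targeting=True)).value)
```

A real system would need some way to estimate the user's cognitive state at run time, which this sketch replaces with a plain boolean flag.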