The Challenge

Robots operating in the real world never have perfect information. This research focuses on the mathematical frameworks needed to make the best available decisions when sensor data is noisy and the environment is dynamic. The project was rooted in aerospace standards, with rigorous safety and predictability as design goals.

Foundational Frameworks

The Theoretical Stack

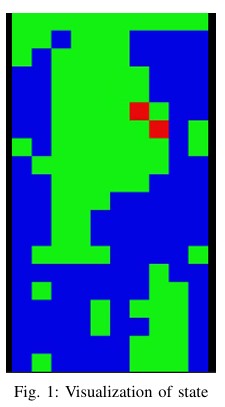

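- MDP to POMDP: Moving beyond fully observable Markov decision processes (MDPs) to partially observable ones (POMDPs), where the agent cannot observe its state directly and must instead maintain a belief: a probability distribution over possible states, such as its current location.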

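- Monte Carlo Tree Search (MCTS): Applied to keep planning tractable in high-dimensional state spaces, where the search tree grows exponentially with the planning horizon. MCTS samples promising branches instead of enumerating every one.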

- Risk-Sensitive Heuristics: Integrating variance-aware cost functions so that the robot prefers low-risk paths even when they are slightly less efficient on average.
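
Two of the ideas above can be illustrated with a minimal Python sketch. It is not the project's implementation: the function names `belief_update` and `risk_sensitive_cost`, the list-based discrete state space, and the mean-plus-weighted-variance form of the cost are all assumptions chosen for illustration. The first function performs one step of a discrete Bayes filter, which is the standard way to maintain a belief in a POMDP; the second scores a path by its expected cost plus a penalty on the variance of that cost.

```python
from statistics import mean, pvariance


def belief_update(belief, transition, likelihood):
    """One discrete Bayes-filter step (hypothetical helper, for illustration).

    belief[s]          prior probability of being in state s
    transition[s][s2]  P(s2 | s, action) for the action just taken
    likelihood[s2]     P(observation | s2) for the observation just received
    """
    n = len(belief)
    # Predict: push the prior belief through the motion model.
    predicted = [
        sum(belief[s] * transition[s][s2] for s in range(n)) for s2 in range(n)
    ]
    # Correct: weight each predicted state by how well it explains the observation.
    unnormalized = [likelihood[s2] * predicted[s2] for s2 in range(n)]
    total = sum(unnormalized)
    if total == 0.0:
        raise ValueError("observation has zero probability under the model")
    # Normalize so the posterior sums to one.
    return [p / total for p in unnormalized]


def risk_sensitive_cost(cost_samples, risk_weight=1.0):
    """Mean-variance score (assumed form): expected cost plus a variance penalty."""
    return mean(cost_samples) + risk_weight * pvariance(cost_samples)


if __name__ == "__main__":
    # Two candidate cells, no motion, and a sensor reading that favours cell 0.
    posterior = belief_update(
        belief=[0.5, 0.5],
        transition=[[1.0, 0.0], [0.0, 1.0]],
        likelihood=[0.9, 0.1],
    )
    print("posterior belief:", posterior)

    # Sampled traversal costs (made-up numbers): the risky path is cheaper on
    # average but occasionally hits a hidden obstacle.
    safe_path = [10, 10, 10, 10]
    risky_path = [2, 2, 2, 30]
    for name, samples in (("safe", safe_path), ("risky", risky_path)):
        print(name, "mean:", mean(samples),
              "risk-sensitive:", risk_sensitive_cost(samples, risk_weight=0.1))
```

With these made-up samples, a planner that compares only mean cost would pick the risky path, while the variance-aware score ranks the safe path lower in cost. The `risk_weight` parameter controls how much average efficiency is traded for predictability; a value of zero recovers a purely expected-cost planner.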

Application & Impact

By implementing these frameworks, we demonstrated a 30% improvement in navigating "hidden-obstacle" scenarios compared with traditional greedy algorithms. The final report serves as a technical blueprint for integrating robust probabilistic planning into modern robotic fleets.